Current insights from the research community on AI research, practice and policy need further study so that they include socio-technical issues such as ethics, bias, transparency, accountability and governance. Beyond these issues, governance discussions also need to address the impact of AI on the environment.

Though still rare in the many discussions on AI, a number of key issues have been raised around the environmental and social impact of AI systems. For AI governance to be more comprehensive, it is important for the research community, policymakers and supporting entities to consider the following aspects:

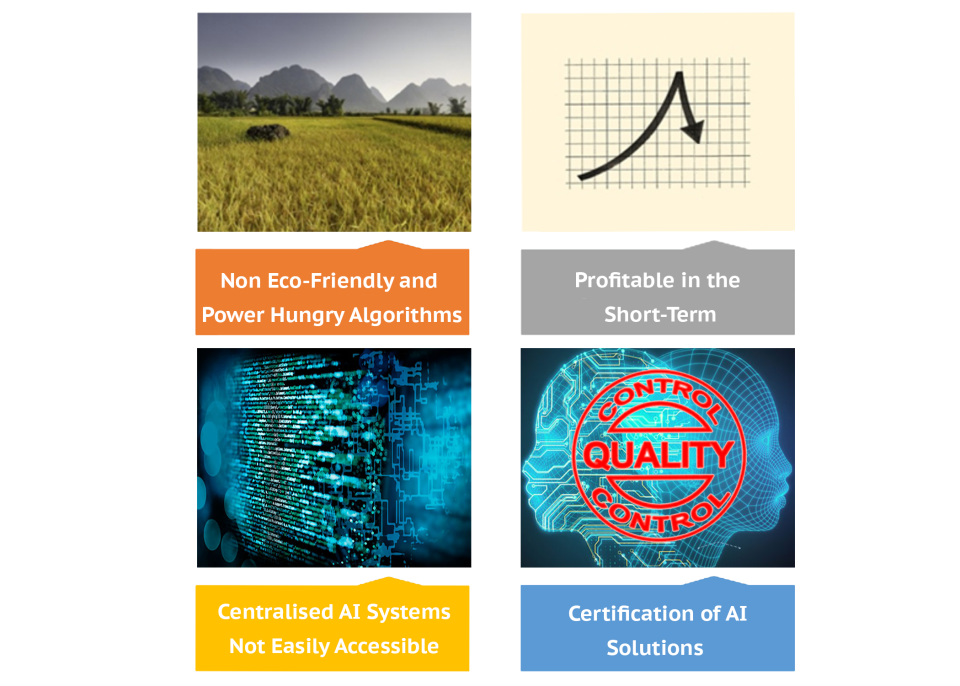

Non-Eco-Friendly and Power-Hungry Algorithms: We need to take into account the power source of the computational capacity and machines needed to process data in AI systems. Are these sources of energy renewable or sustainable? On the social footprint, it is important to ask how many violations of ethics and values, and how many privacy and security loopholes, are being created by the large amounts of data powering our AI systems.

Profitable in the Short Term: Policymakers should map the impact of machine learning solutions on the SDGs by weighing both their positive and negative effects on the realisation of the respective goals. How much will it cost to remedy the effects of choosing the short-term profits of business-inclined AI solutions and AI monetisation over the bigger picture of a true win-win approach that is profitable and sustainable in the long run?

Centralised AI Systems Not Easily Accessible: Are AI solutions widely reachable by the majority of end-users? Given the computational power and supporting resources needed to make AI systems work and ready for consumption, AI solutions are currently accessible only to big organisations that have those resources. This is undoubtedly creating rifts among various stakeholders. It is time that we pursued decentralised AI-driven solutions rather than centralised designs to ensure that these solutions are accessible to those in the Global South.

No Certification System in Place: To strengthen the AI governance framework, AI solutions being deployed in the market need to pass an automated, standardised quality check process that provides a complete quality check and confirmation. The certification mandate will ensure due diligence is exercised at all stages of the AI development life cycle.

AI has enormous potential to address challenges facing humanity and the environment, but to realise its full potential we need to tread carefully, as there are grey areas yet to be addressed. In addition to the privacy and security concerns of AI, the environmental costs and social impact of current AI systems require immediate attention in policy discussions.

Suggested citation: Attlee Gamundani, "AI Governance Incomplete without Considering the Impact of Carbon and Social Footprints," UNU Macau (blog), 2020-08-21, https://unu.edu/macau/blog-post/ai-governance-incomplete-without-considering-impact-carbon-and-social-footprints.
